How multi-modal embedding decomposition solves the AI training-data crisis.

The AI industry is hitting the training-data wall. Epoch AI projects the ~300 trillion tokens of public human text will be exhausted by frontier models between 2026 and 2032. This paper argues the crisis is misframed: the bottleneck is not raw volume but labeled, meaningful, multi‑dimensional data.

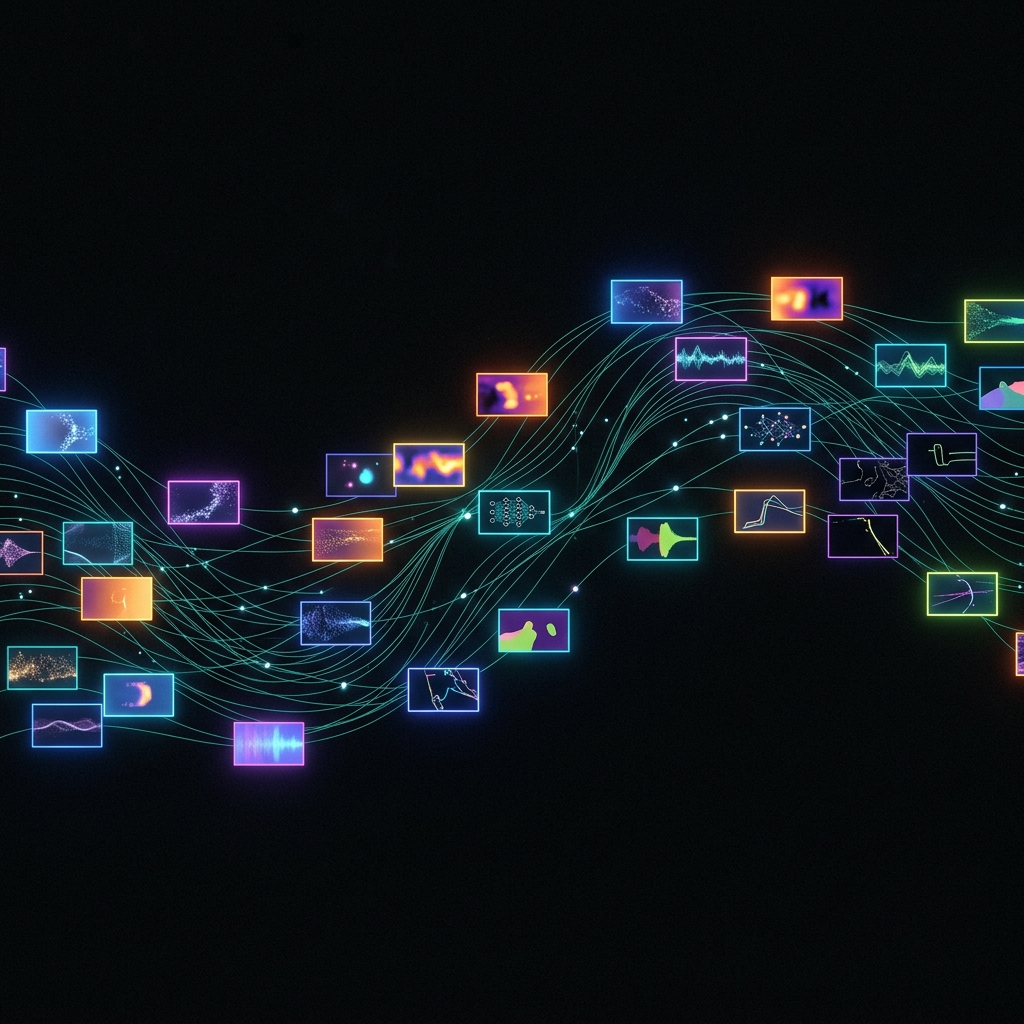

Derived Data Abundance — the principle of running existing data through N independent embedding models to produce 100x+ meaningful training signal per corpus — offers a third path past the wall. We demonstrate it through two implemented systems: ClipCannon (video, 7 modalities, 4,044 dimensions) and Context Graph (text, 13 embedders, 78 cross-correlations).

We introduce meaning compression as a new category — distinct from weight, activation, and data compression — and argue it is the missing primitive for scaling training data beyond the public-text stock. The resulting training method is Teleological Constellation Training (TCT).

The full paper preserves the author's original phrasing, including the initial 91× multiplication figure from early implementations. Current Teleox systems and external reporting use the refined figure of 100x+, scaling toward 50+ embedders.

Distinct from weight, activation, and data compression — which all reduce bits per unit of information. Meaning compression does the opposite: it increases meaningful signal per byte of raw data. That is the missing primitive for scaling training corpora past the human-text stock.

Each embedder extracts a different dimension of meaning — visual, semantic, temporal, causal, relational. All are grounded in real observations, not model outputs, so the derived data is immune to the model-collapse failure mode inherent in synthetic generation.

Training signal grows with the number of embedders applied. 13 today, with a clear engineering path toward 50+. This is the third path past the data wall — distinct from "find more raw data" and "generate synthetic data" — and the one that composes with every other axis of scaling.

Commercialized by Teleox.ai — TCT-trained LoRAs that force deterministic model outputs, built on 100x+ meaning-labeled training data.